Imagine a world where chatbots can find any piece of information you ask for, accurately and within seconds. Artificial intelligence has progressed steadily since its beginnings and continues to evolve. AI models now go beyond generating text: they are trained to excel in many fields and to serve as virtual assistants that help people with their work. They can actively research the information they need and take relevant actions. This is where Retrieval-Augmented Generation (RAG) comes in, a game-changer in natural language processing (NLP). In short, RAG combines information retrieval with text generation to produce more informative and accurate output.

What is Retrieval-Augmented Generation (RAG)?

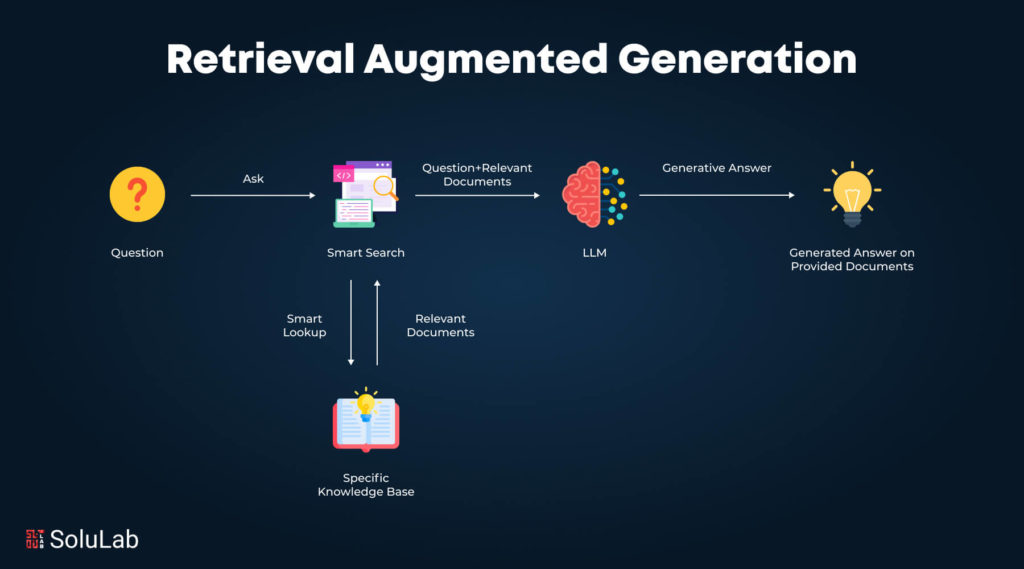

Retrieval-augmented generation is a technique that combines text generation with information retrieval to create more accurate and informative content. But how exactly does it work? It retrieves relevant information from a database or other external source and uses that information to generate text. To understand how RAG models work, look at their components:

- Large Language Model (LLM): This AI workhorse can already answer questions, translate languages, and generate text. Retrieval augmentation gives it a critical boost in accuracy.

- Information Retrieval System: This component works like a powerful search engine, finding the data most relevant to the LLM’s task.

- Knowledge Base: RAG draws its information from this trusted source. It could be a large external resource or a specialized database focused on a particular domain.
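To make the three components concrete, here is a minimal sketch in plain Python, under stated assumptions. The knowledge base is a hard-coded list of sample sentences, retrieval uses simple bag-of-words cosine similarity instead of the embedding models real systems typically use, and `generate` is a hypothetical placeholder standing in for an actual LLM call. All names are illustrative.

```python
import math
import re
from collections import Counter

# Knowledge base: a tiny in-memory list of documents (hypothetical sample data).
KNOWLEDGE_BASE = [
    "RAG retrieves documents from an external knowledge base before generating text.",
    "Large language models are trained on a fixed dataset with a knowledge cutoff.",
    "A vector database stores embeddings so similar passages can be found quickly.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Information retrieval system: return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine the retrieved passages with the user's question."""
    passages = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{passages}\n\nQuestion: {query}"


def generate(prompt: str) -> str:
    """Hypothetical placeholder for the LLM; a real system would call a model here."""
    return f"[LLM response to a {len(prompt)}-character prompt]"


if __name__ == "__main__":
    question = "What does RAG retrieve before generating text?"
    context = retrieve(question, KNOWLEDGE_BASE)
    prompt = build_prompt(question, context)
    print(prompt)
    print(generate(prompt))
```

Swapping `retrieve` for a vector-database query, or `generate` for a real model call, leaves the overall flow unchanged: retrieve, build the prompt, then generate.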

Why is Retrieval Augmented Generation Required?

Retrieval-augmented generation (RAG) addresses the limitations of language models and helps them generate more accurate and informative responses. Here are the main reasons RAG is needed:

1. Enhancing Factual Accuracy

Traditional language models rely only on what they learned during training, and their limited context windows mean they can take only a limited amount of text into account at once. RAG grounds the generated text in retrieved, up-to-date data, which makes the output more reliable.

2. Improving Relevance

RAG retrieves information relevant to the user’s query or command from a knowledge base, so the generated text stays on topic. This is especially important when a task demands factual accuracy.

3. Expanding Knowledge

On their own, LLMs know only what was in the data they were trained on. RAG gives them access to a much larger body of information, expanding their knowledge and enabling them to handle more complex tasks.

4. Enhanced Explainability

RAG makes the model’s reasoning easier to inspect. By showing the retrieved information, it lets users see how the model arrived at a response, which increases trust and transparency.
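The explainability point can be as simple as returning source identifiers together with the answer. The sketch below assumes each document carries a `source` label; `Document`, `Answer`, and `answer_with_sources` are hypothetical names, keyword overlap stands in for a real retriever, and the "answer" is just the joined passages where an LLM would normally write the reply.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Document:
    source: str  # where the passage came from, such as a file name or URL
    text: str


@dataclass
class Answer:
    text: str
    sources: list[str] = field(default_factory=list)  # evidence shown to the user


def words(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer_with_sources(question: str, docs: list[Document], k: int = 2) -> Answer:
    """Retrieve up to k matching documents and return their sources alongside the answer."""
    q = words(question)
    ranked = sorted(docs, key=lambda d: len(q & words(d.text)), reverse=True)
    hits = [d for d in ranked[:k] if q & words(d.text)]  # skip documents with no overlap
    # Placeholder generation step: a real system would pass `hits` to an LLM here.
    text = " ".join(d.text for d in hits) or "No supporting documents found."
    return Answer(text=text, sources=[d.source for d in hits])


if __name__ == "__main__":
    docs = [
        Document("refund-policy.md", "Refunds are issued within 14 days of purchase."),
        Document("shipping.md", "Shipping is free on orders over 50 dollars."),
    ]
    result = answer_with_sources("How many days do refunds take?", docs)
    print(result.text)
    print("Sources:", result.sources)
```

Returning the sources as data, rather than burying them in the generated text, lets an interface show users exactly which passages the answer relied on.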

The Synergy of Retrieval Based and Generative Models

RAG bridges these two approaches and leverages the strengths of both: generative models supply fluent, creative output, while retrieval models supply the information.

- Retrieval-Based Models

Imagine a librarian who specializes in one area of knowledge. Retrieval-based models work in a similar way: they look up explicit, stored information and return what matches the request. They often rely on question-and-answer templates to solve problems and collect information. This keeps the information coherent and accurate, especially for tasks with definite answers.

Despite this, purely retrieval-based models have their limitations as well. They struggle with queries that were not anticipated in their data and with new situations outside the material they were built on.

- Generative Models

Generative models, on the other hand, excel at producing new language. They use deep learning techniques to analyze large amounts of text and learn the basic forms and structures of language. This enables them to translate between languages, produce new kinds of text, and generally create original content. They are adaptable and cope well with new scenarios.

However, generative models can sometimes produce factual inaccuracies. Without grounding in real data, their responses can be creative but incorrect, or, as some people say, full of hot air.

The Role of Language Models and User Input

In retrieval-augmented generation applications, language models and user inputs play a crucial role. Here’s how:

1. Boosting Creativity

LLMs can compose original text, translate between languages, and write many kinds of material, from code to poetry. The user’s input acts as a signal that guides the LLM in a RAG agent toward the appropriate output.

2. Personalized Interactions

Whereas traditional systems rely on hard-coded responses, LLMs can tailor their replies to what they learn from users. Take, for instance, a chatbot that remembers your previous chats and the kind of responses you prefer.

3. Increasing Accuracy

LLM applications are continuously being developed and improved. User feedback, especially constructive feedback, helps improve their understanding of language and the correctness of their responses.

4. Guiding Information Retrieval

User input usually enters a RAG system as a query. It directs the information retrieval system to the information the LLM needs to formulate its answer.

5. Finding New Uses

Users may also present the LLM with situations and challenges it has not encountered before. This can push LLMs to the limits of what they can achieve and reveal new possibilities for their use.
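Because user input usually reaches the retriever as a query, a follow-up such as "What about its battery life?" is hard to search on its own. One simple approach, assumed here for illustration rather than taken from any particular product, is to prepend the last few user turns to the new message before retrieval. `ChatSession`, `retrieval_query`, and the "X200 laptop" example are hypothetical.

```python
from collections import deque


class ChatSession:
    """Remembers recent user turns so follow-up questions can be resolved at retrieval time."""

    def __init__(self, max_turns: int = 2) -> None:
        # A deque with maxlen drops the oldest turn automatically once it is full.
        self.history: deque[str] = deque(maxlen=max_turns)

    def retrieval_query(self, message: str) -> str:
        """Combine the remembered turns with the new message to form the search query."""
        query = " ".join([*self.history, message])
        self.history.append(message)
        return query


if __name__ == "__main__":
    session = ChatSession(max_turns=2)
    print(session.retrieval_query("Tell me about the X200 laptop"))
    print(session.retrieval_query("What about its battery life?"))
```

Systems often ask the LLM itself to rewrite a follow-up into a standalone question instead; plain concatenation is shown here only because it is easy to follow.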

Understanding External Data

The external data behind Retrieval Augmented Generation (RAG) is not an arbitrary assortment of articles; it is a curated collection of credible sources that underpins RAG’s abilities. Here’s why external data matters so much to RAG:

- Knowledge Base

RAG relies mainly on external data as its source of knowledge. Examples include databases, news archives, scholarly articles, and an organization’s internal knowledge base.

- Accuracy Powerhouse

By feeding the LLM relevant data, RAG helps keep its answers factual. This is crucial for answering questions and presenting information.

- Keeping Up to Date

Unlike a static large language model, RAG uses external data to obtain the most up-to-date information. This keeps RAG’s replies current with the contemporary world.

- The Value of Quality Data

RAG’s answers are highly sensitive to the quality of its external data. Defects in a data source, such as inaccuracies or bias, can show up in the generated text.
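Keeping up to date works because new information goes into the retrieval index, not into the model’s weights. The sketch below uses a hypothetical `KnowledgeIndex` class with a minimal inverted index and word-count ranking (a simplification; real systems usually use BM25 or embeddings). A document becomes searchable the moment it is added, with no retraining. It also illustrates the data-quality caveat: the index returns whatever it was given, so the outdated 2023 document is still retrieved alongside the new one.

```python
import re


def words(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class KnowledgeIndex:
    """Minimal inverted index mapping each word to the IDs of documents that contain it."""

    def __init__(self) -> None:
        self.docs: dict[int, str] = {}
        self.postings: dict[str, set[int]] = {}

    def add(self, text: str) -> int:
        """Make a document searchable immediately; the language model is never retrained."""
        doc_id = len(self.docs)
        self.docs[doc_id] = text
        for word in words(text):
            self.postings.setdefault(word, set()).add(doc_id)
        return doc_id

    def search(self, query: str) -> list[str]:
        """Return matching documents, most shared query words first (ties by insertion order)."""
        counts: dict[int, int] = {}
        for word in words(query):
            for doc_id in self.postings.get(word, set()):
                counts[doc_id] = counts.get(doc_id, 0) + 1
        ranked = sorted(counts, key=lambda i: (-counts[i], i))
        return [self.docs[i] for i in ranked]


if __name__ == "__main__":
    index = KnowledgeIndex()
    index.add("The 2023 pricing page lists the Pro plan at 20 dollars.")
    print(index.search("2024 Pro plan price"))  # only the 2023 document exists so far
    index.add("The 2024 pricing update raises the Pro plan to 25 dollars.")
    print(index.search("2024 Pro plan price"))  # the new document now ranks first
```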

Benefits of Retrieval Augmented Generation

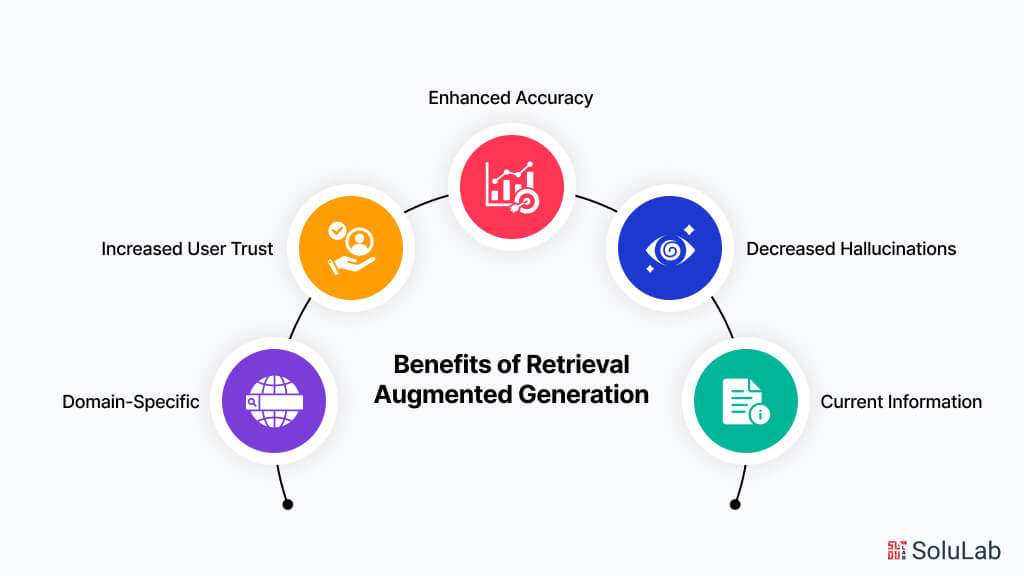

Beyond drawing on a larger knowledge base to give more informative and accurate results, RAG systems offer several other benefits. Here are the benefits of retrieval augmented generation:

1. Enhanced Accuracy

Factual inconsistency, a major problem in LLMs, is substantially reduced by RAG. By relying on facts from external sources, RAG improves the accuracy and factual veracity of the LLM’s responses.

2. Decreased Hallucinations

LLMs occasionally hallucinate, generating plausible but false information. RAG reduces this by grounding responses in the retrieved data, which yields more reliable and credible results.

3. Current Information

Unlike LLMs that rely solely on their training datasets, RAG uses external data to acquire the most current information. This keeps the generated answers relevant and recent enough to meet users’ needs.

4. Increased User Trust

Because RAG can back its answers with sources, users find the information it provides more credible. This matters for applications such as customer service chatbots, where reliability and credibility are paramount.

5. Domain-Specific Expertise

By drawing on pertinent external data sources, RAG can specialize a system for particular domains. This enables it to provide answers that demonstrate correctness and command of the subject matter.

Approaches in Retrieval Augmented Generation

RAG systems use various approaches to combine retrieval and generation. Here are some of them:

- Easy

Retrieve the relevant documents and insert them directly into the generation prompt so the answer fully covers the question.

- Map Reduce

Generate a separate response for each document, then combine the individual responses into a final answer that draws on knowledge from many sources.

- Map Refine

Improve the answer iteratively: generate an initial answer from the first document, then refine it with each subsequent document.

- Map Rerank

Generate a response for each document, rank the responses by accuracy and relevance, and select the highest-ranked one as the final answer.

- Filtering

Use models to find documents, then keep only the relevant results as context for generating more focused answers.

- Contextual Compression

This addresses information overload by extracting only the passages that contain answers, producing concise, informative replies.

- Summary-Based Index

Index document summaries along with document snippets, and generate answers from the relevant summaries and snippets so they are brief but informative.

- Forward-Looking Active Retrieval Augmented Generation (FLARE)

Use the sentence the model is about to generate as a query, first to find relevant texts and then to refine the answer step by step. FLARE triggers retrieval only when the model appears uncertain about what it is generating, which makes generation a dynamic, iterative process.
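The Map Reduce, Map Refine, and Map Rerank approaches differ mainly in how they call the model per document, while Contextual Compression trims documents before they reach the model. The sketch below shows each as a small Python function, assuming an `llm` callable that maps a prompt string to a completion (hypothetical; plug in any real client). The prompt wording, the `score` function used for reranking, and the sentence-overlap rule for compression are illustrative assumptions, not the exact method of any particular library.

```python
import re
from typing import Callable

LLM = Callable[[str], str]  # any function that maps a prompt to a completion


def map_reduce(question: str, docs: list[str], llm: LLM) -> str:
    """Map: answer against each document separately. Reduce: combine the partial answers."""
    partials = [llm(f"Context: {d}\nQuestion: {question}") for d in docs]
    return llm("Combine these answers:\n" + "\n".join(partials) + f"\nQuestion: {question}")


def refine(question: str, docs: list[str], llm: LLM) -> str:
    """Draft an answer from the first document, then revise it with each later one."""
    answer = llm(f"Context: {docs[0]}\nQuestion: {question}")
    for d in docs[1:]:
        answer = llm(f"Current answer: {answer}\nNew context: {d}\nImprove the answer to: {question}")
    return answer


def map_rerank(question: str, docs: list[str], llm: LLM,
               score: Callable[[str, str], float]) -> str:
    """Answer against each document, score every answer, and return the best one."""
    answers = [llm(f"Context: {d}\nQuestion: {question}") for d in docs]
    return max(answers, key=lambda a: score(question, a))


def compress(question: str, doc: str) -> list[str]:
    """Contextual compression: keep only sentences that share a word with the question."""
    q = set(re.findall(r"[a-z0-9]+", question.lower()))
    sentences = [s.strip() for s in doc.split(".") if s.strip()]
    return [s for s in sentences if q & set(re.findall(r"[a-z0-9]+", s.lower()))]


if __name__ == "__main__":
    prompts: list[str] = []

    def stub_llm(prompt: str) -> str:
        """Hypothetical stand-in for a real model: records the prompt and returns a label."""
        prompts.append(prompt)
        return f"answer {len(prompts)}"

    docs = ["Refunds take 14 days. Shipping is free.", "Refunds need a receipt."]
    print(map_reduce("How long do refunds take?", docs, stub_llm))  # 2 map calls + 1 reduce call
    print(compress("How long do refunds take?", docs[0]))
```

Map Reduce and Map Rerank make one independent call per document, so those calls can run in parallel; Refine is sequential because each step depends on the previous answer.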

Applications of Retrieval Augmented Generation

Now that you know what retrieval augmented generation is and how it works, here are some of its applications:

1. Smarter Q&A Systems

RAG enhances Q&A systems by drawing on quality content such as scholarly articles or instructional material. This keeps the answers accurate, comprehensive, and informative.

2. Factual and Creative Content

RAG can generate diverse creative text, such as articles or advertisements. It goes further, though: its content stays matched to the topic, and the retrieved information keeps it grounded in fact.

3. Real-World Knowledge for Chatbots

RAG allows chatbots to draw on real-world data during conversations. In customer service, for example, a chatbot can use RAG to access knowledge bases and then provide accurate, helpful replies.

4. Better Search Results

RAG improves information retrieval systems by refining the documents returned and improving how they are matched to queries. It goes beyond keyword search: it locates documents that hold the information a topic requires and gives users informative snippets that capture the essence of the topic.

5. Empowering Legal Research

RAG can help legal practitioners with research and analysis. With RAG, attorneys can gather related case studies, papers, and other records to support their case.

6. Personalized Recommendations

By integrating outside information, RAG can make recommendations that reflect user preferences in light of external input. For example, a RAG-based movie recommender could not only suggest movies from the user’s favorite genre but also give special emphasis to the most relevant titles within that genre.

How is LangChain Used for RAG?

LangChain acts as the assembler that links together the components of a RAG application. It helps with the RAG process in the following ways:

- Data Wrangling

RAG depends on external data sources, and LangChain helps manage them, providing tools to process, format, and check data before the LLM consumes it.

- Information Retrieval Pipeline

LangChain handles data retrieval. It passes the user input to the chosen information search system, for instance a search engine or knowledge base, to find the most relevant material.

- LLM Integration

LangChain sits between the retrieved data and the LLM. Before passing the retrieved data to the LLM for generation, it formats it and may even summarize or rewrite it.

- Prompt Engineering

LangChain can generate prompts tailored to the LLM in use. To elicit a crisp and informative response, it combines the gathered material with the user’s question.

- Modular Design

LangChain is modular by design. Developers can swap components of the RAG procedure and rework it as needed, so RAG systems can be built for specific goals.
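The modular design described above can be illustrated without LangChain itself. The sketch below is plain Python and deliberately does not use LangChain’s API: a hypothetical `Retriever` protocol lets any retriever be swapped in, a `string.Template` plays the role of a prompt template, and `format_context` stands in for the formatting step described under LLM Integration. `RagPipeline`, `KeywordRetriever`, and `FixedRetriever` are illustrative names.

```python
import re
from dataclasses import dataclass
from string import Template
from typing import Callable, Protocol


class Retriever(Protocol):
    """Anything with a retrieve(query) method can be plugged into the pipeline."""

    def retrieve(self, query: str) -> list[str]: ...


@dataclass
class KeywordRetriever:
    """Returns documents that share at least one word with the query."""
    docs: list[str]

    def retrieve(self, query: str) -> list[str]:
        q = set(re.findall(r"[a-z0-9]+", query.lower()))
        return [d for d in self.docs if q & set(re.findall(r"[a-z0-9]+", d.lower()))]


@dataclass
class FixedRetriever:
    """Always returns the same passages; handy for testing the rest of the pipeline."""
    passages: list[str]

    def retrieve(self, query: str) -> list[str]:
        return list(self.passages)


# Prompt engineering: one template that merges retrieved context with the question.
PROMPT = Template("Use the context to answer.\nContext:\n$context\nQuestion: $question")


@dataclass
class RagPipeline:
    retriever: Retriever
    llm: Callable[[str], str]  # hypothetical model client: prompt in, text out
    max_passages: int = 3

    def format_context(self, passages: list[str]) -> str:
        """Trim and number the retrieved passages before they reach the LLM."""
        return "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages[: self.max_passages]))

    def run(self, question: str) -> str:
        passages = self.retriever.retrieve(question)
        prompt = PROMPT.substitute(context=self.format_context(passages), question=question)
        return self.llm(prompt)


if __name__ == "__main__":
    echo = lambda prompt: prompt  # stand-in LLM that returns its prompt unchanged
    docs = ["LangChain connects retrievers and models.", "Bananas are yellow."]
    print(RagPipeline(KeywordRetriever(docs), llm=echo).run("What connects retrievers?"))
    print(RagPipeline(FixedRetriever(["Pinned passage."]), llm=echo).run("Anything?"))
```

Because `RagPipeline` depends only on the `retrieve` method, replacing `KeywordRetriever` with a vector-store-backed class requires no other changes; LangChain applies the same idea through its own retriever and prompt abstractions.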

The Future of RAG and LLMs

Large language models and retrieval-augmented generation are transforming language processing. Here’s how they may develop in the future:

1. Improved Factual Reasoning

As LLMs get better at determining the relationships between multiple pieces of information, they will be able to provide more elaborate and thoughtful answers.

2. Multimodal Integration

RAG is currently mostly text-based, but in the future it could be combined with other modalities such as audio or images. A system that retrieves related images or videos alongside text could let LLMs offer far more detailed and comprehensive responses.

3. LLMs for Lifelong Learning

Current LLMs are trained on static datasets. Although integrating them with RAG systems still has shortcomings today, in the future it may let models keep learning over time and improve their response time and data storage.

4. Explanation and Justification

Through RAG systems, retrieved information sources can enable LLMs to provide not only an answer to a question but also the reasoning behind it. This, in turn, will increase users’ confidence in AI products.

5. Democratization of AI

As RAG and LLMs evolve, people may gain access to easy-to-use tools that make AI practical for tasks such as research and article writing.

Final Words

Retrieval Augmented Generation (RAG) is a leap forward in natural language processing, bridging the gap between vast databases and language models. RAG helps users access and understand information more efficiently and accurately. Its approaches and benefits make it a better long-term choice for users.

With ongoing research and new techniques constantly being explored, the future of RAG looks strong. You can expect more powerful RAG systems that transform how we interact with technology and access knowledge, helping us reach greater insights with ease and accuracy.

As an AI development company, SoluLab specializes in implementing cutting-edge technologies like RAG to create innovative and efficient AI solutions tailored to your business needs. Our team of experts is dedicated to delivering custom AI applications that enhance your operations, improve customer interactions, and drive business growth. Ready to harness the power of RAG for your business? Contact SoluLab today to explore how we can help you leverage AI to achieve your goals. Let’s innovate together!

FAQs

1. What is Retrieval Augmented Generation?

RAG works in two phases: retrieval and generation. It first extracts relevant information from external sources, documents, or the organization’s databases. It then uses this data to formulate a response, such as a text or an answer to a question.

2. How does RAG address the limitations of LLMs?

LLMs can drift off topic at times and may also state wrong facts. RAG addresses this by supplying the LLM with real data when it generates replies, making those replies more dependable and relevant.

3. What challenges does RAG face?

RAG is an effective tool, but it has its limits. One problem is ensuring that the retrieved material is relevant. Another is that the model does not search recursively; that is, it cannot devise an improved search plan from its initial results. Researchers are currently working on ways to overcome these constraints.

4. What are some of the real-life applications of RAG?

RAG has many potential uses. It can power smarter virtual assistants and chatbots, help authors and marketers create more content, and refine how firms deliver customer support.

5. How can SoluLab assist you with the implementation of RAG?

SoluLab can help your business implement RAG by structuring and indexing your data, helping you choose the right retrieval and generation models, and integrating your RAG system with your applications and workflows. In this way, SoluLab can help you build an effective RAG system.